Research agenda

Humans execute several eye movements per second to project objects of interest onto the fovea, the central area of the retina that allows high-acuity vision. In our lab, we study several aspects of the interaction between eye movements and visual perception.

Reviews

- Stewart, E. E. M., Valsecchi, M., & Schütz, A. C. (2020). A review of interactions between peripheral and foveal vision. Journal of Vision, 20(12):2, 1-35. https://doi.org/10.1167/jov.20.12.2

- Souto, D., & Schütz, A. C. (2020). Task-relevance is causal in eye movement learning and adaptation. In Kara D. Federmeier & Elizabeth Schotter (Eds.), Gazing Toward the Future: Advances in Eye Movement Theory and Applications, 73, 157-193. Amsterdam: Elsevier. https://doi.org/10.1016/bs.plm.2020.06.002

- Schütz, A. C., Braun, D. I., & Gegenfurtner, K. R. (2011). Eye movements and perception: a selective review. Journal of Vision, 11(5):9, 1-30. http://dx.doi.org/10.1167/11.5.9

Integration of bottom-up and top-down processing

For each eye movement, our brain has to trade off competing bottom-up and top-down signals, such as visual salience, object recognition, plans, and value. We investigate how these signals are integrated for eye movement control.

- Wolf, C., Wagner, I., & Schütz, A. C. (2019). Competition between salience and informational value for saccade adaptation. Journal of Vision, 19(14):26, 1–24. https://doi.org/10.1167/19.14.26

- Schütz, A. C., Lossin, F., & Gegenfurtner, K. R. (2015). Dynamic integration of information about salience and value for smooth pursuit eye movements. Vision Research, 113, 169-178. http://dx.doi.org/10.1016/j.visres.2014.08.009

- Schütz, A. C., Trommershäuser, J., & Gegenfurtner, K. R. (2012). Dynamic integration of information about salience and value for saccadic eye movements. Proceedings of the National Academy of Sciences of the United States of America, 109(19), 7547-7552. http://dx.doi.org/10.1073/pnas.1115638109

Perceptual consequences of eye movements

The execution of an eye movement changes the visual input on the retina dramatically, but nevertheless we perceive the world as stable and homogeneous. Here we study how perception is modulated by the execution of eye movements and how visual information is maintained and integrated across eye movements.

- Braun, D. I., Schütz, A. C., & Gegenfurtner, K. R. (2021). Age effects on saccadic suppression of luminance and color. Journal of Vision, 21(6):11, 1–19. https://doi.org/10.1167/jov.21.6.11

- Wolf, C., & Schütz, A. C. (2015). Trans-saccadic integration of peripheral and foveal feature information is close to optimal. Journal of Vision, 15(16):1, 1-18. http://dx.doi.org/10.1167/15.16.1

- Schütz, A. C., Braun, D. I., Kerzel, D., & Gegenfurtner, K. R. (2008). Improved visual sensitivity during smooth pursuit eye movements. Nature Neuroscience, 11(10), 1211-1216. http://dx.doi.org/10.1038/nn.2194

Learning and optimization

Eye movements are not only among the most frequent human movements, but also among the most accurate and precise. We study how learning mechanisms maintain this high performance and how eye movements can be optimized for different perceptual tasks.

- Wagner, I., & Schütz, A. C. (2023). Interaction of dynamic error signals in saccade adaptation. Journal of Neurophysiology, 129, 1-18. https://doi.org/10.1152/jn.00419.2022

- Paeye, C., Schütz, A. C., & Gegenfurtner, K. R. (2016). Visual reinforcement shapes eye movements in visual search. Journal of Vision, 16(10):15, 1-15. http://dx.doi.org/10.1167/16.10.15

- Schütz, A. C., Kerzel, D., & Souto, D. (2014). Saccadic adaptation induced by a perceptual task. Journal of Vision, 14(5):4, 1-19. http://dx.doi.org/10.1167/14.5.4

Individual differences in perceptual preferences

The visual system is often confronted with ambiguous visual input and has to choose between different possible interpretations of the sensory evidence at hand. Previous research showed that humans often exhibit perceptual preferences for specific interpretations. Here we study how these preferences are acquired and how they differ between individuals.

- Wexler, M., Mamassian, P., & Schütz, A. C. (2022). Structure of visual biases revealed by individual differences. Vision Research, 195, 108014. https://doi.org/10.1016/j.visres.2022.108014

- Schütz, A. C., & Mamassian, P. (2016). Early, local motion signals generate directional preferences in depth ordering of transparent motion. Journal of Vision, 16(10):24, 1-20. http://dx.doi.org/10.1167/16.10.24

- Schütz, A. C. (2014). Inter-individual differences in preferred directions of perceptual and motor decisions. Journal of Vision, 14(12):16, 1-17. http://dx.doi.org/10.1167/14.12.16

Grants

Workshops

Laboratories

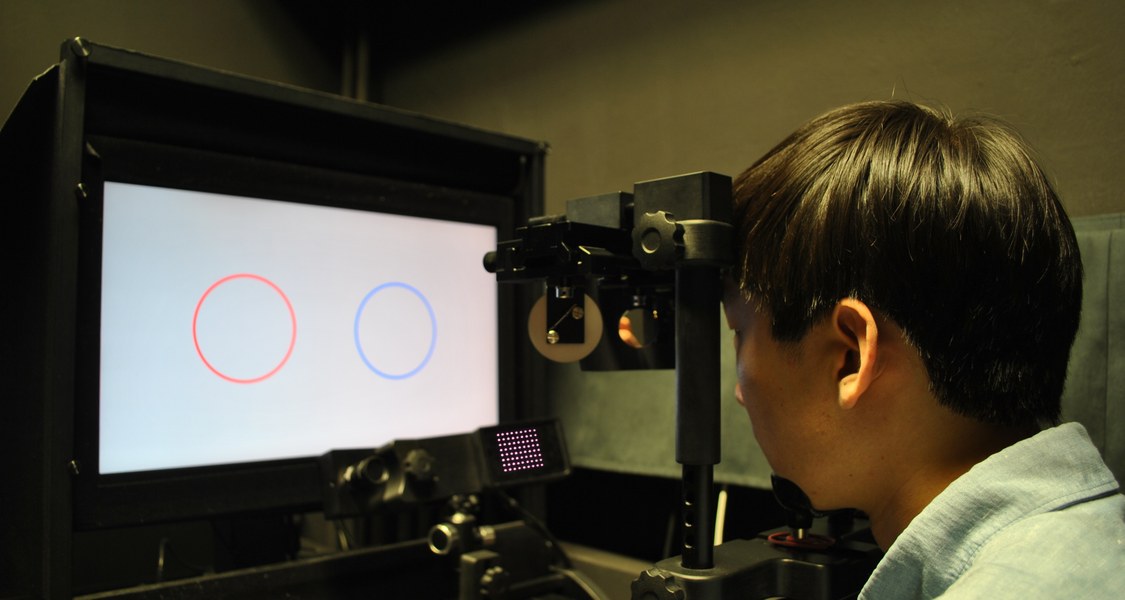

In our labs we study the interaction of eye movements and visual perception. We measure the eye movements of our participants while they observe objects on a monitor and perform oculomotor and/or visual tasks.

Equipment in the "small" laboratory

Desktop-mounted EyeLink 1000+ (SR Research)

ViewPixx monitor (VPixx)

Four-mirror stereoscope

Equipment in the "large" laboratory

Desktop-mounted EyeLink 1000+ (SR Research)

QuadPixx projector (VPixx)

Rear projection screen (Stewart)

64-channel actiCHamp Plus EEG (Brain Products)

Equipment in the "far" laboratory

Desktop-mounted EyeLink 1000 (SR Research)

ViewPixx monitor (VPixx)

Two-mirror stereoscope